After the Slide Deck

Every company I’ve worked with in the last two years has an AI strategy. Most of them have three. They live in slide decks with names like “AI Transformation Roadmap” and “Copilot Adoption Framework.” They have quadrants. They have phases. They have a timeline that ends with a gradient arrow pointing toward “Scale.”

None of that is the hard part.

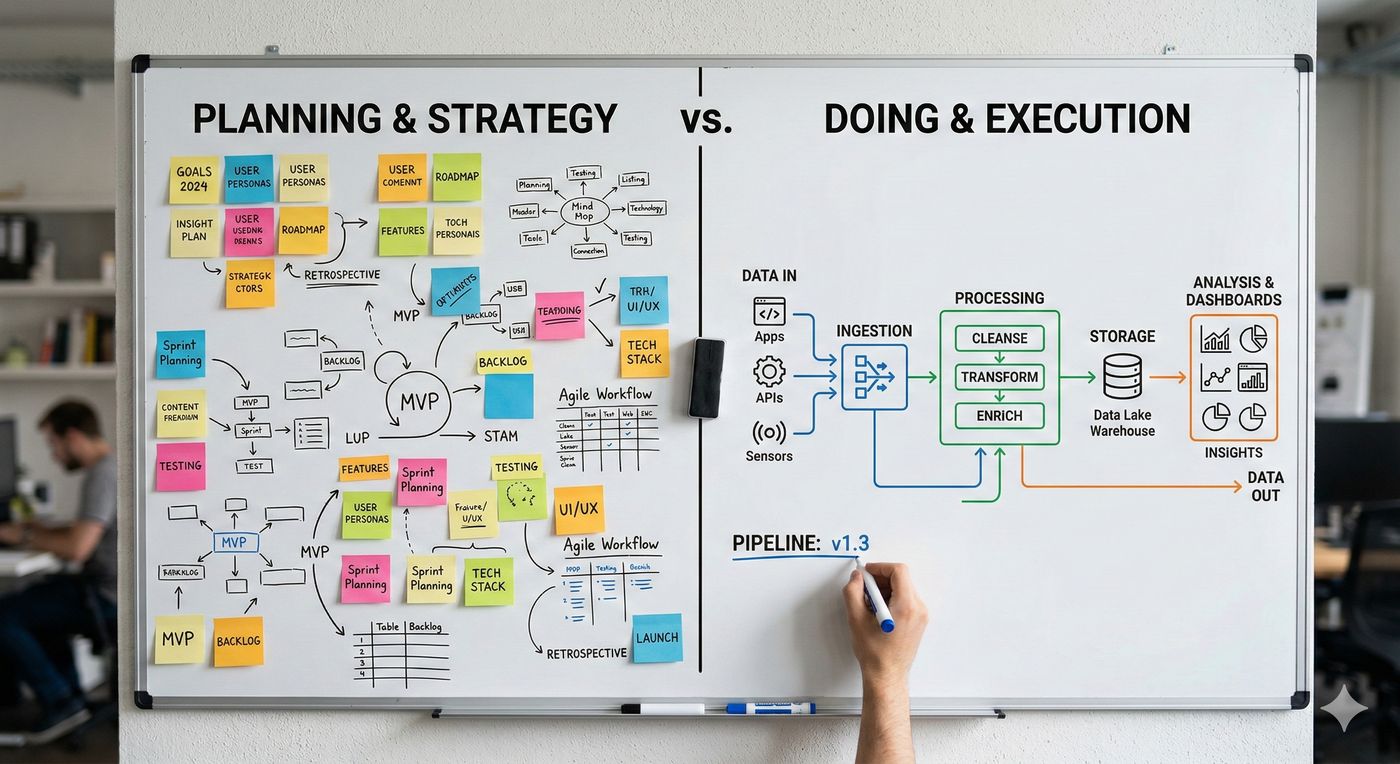

The hard part is Monday morning. It’s the moment someone on a delivery team opens their laptop and has to figure out how the strategy becomes a system — something repeatable, something a team can actually run without the consultant who designed the slides standing over their shoulder.

I’ve spent most of my career in that gap. Not the vision part. The part where vision meets the build and you find out what was real and what was aspiration dressed up in a nice font. Developer platforms, documentation systems, content infrastructure — the unsexy layers that determine whether an AI investment compounds or just costs. Without those foundations, AI efforts don’t fail dramatically. They wobble. They drift. They become the pilot that never quite graduated.

That gap is why I joined iSoftStone as a Senior Client Partner. The role sits at the intersection of sales, delivery, and long-term account growth — which is a fancy way of saying I work with executive teams to connect what they want AI to do with what their teams can actually sustain. Copilot rollouts, cloud platform modernization, AI-enabled workflows. The technology is the easy part. Figuring out how teams operate differently once the technology is in place — that’s where the real work lives.

Most organizations treat AI adoption like a tool selection problem. Pick the right model, the right vendor, the right integration, and the rest follows. It doesn’t. The rest is organizational. How does the delivery model change? Who owns the output when the AI is doing half the work? How do you make something repeatable when the technology itself is changing every quarter?

These aren’t technical questions. They’re trust questions. They’re the kind of questions that don’t fit in a quadrant.

I’ve learned this the hard way, through years of building the layer that sits between strategy and execution. Good documentation isn’t just helpful — it’s load-bearing. A well-designed developer platform doesn’t just save time — it’s the difference between an AI capability that scales and one that stays a demo. The foundations aren’t optional. They’re the whole game.

So that’s where I am now. Less time in the slide deck, more time in the system. Helping teams figure out not just what AI can do, but what it looks like when it actually works — sustainably, repeatably, without someone having to re-explain the architecture every sprint.

The hard parts are the fun parts. They always have been.